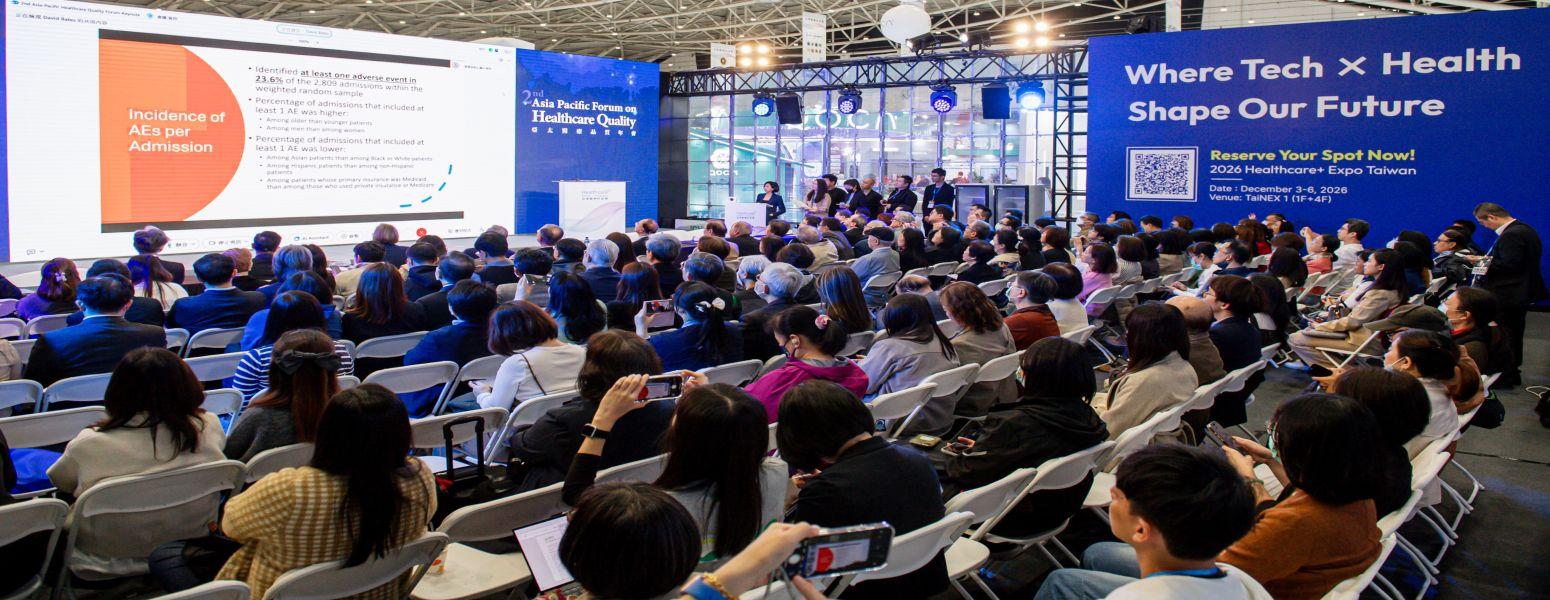

David Bates, a member of the expert board for Newsweek's World's Best Hospitals rankings and a professor at Harvard Medical School, was invited to give an online keynote at this year's Asia-Pacific Healthcare Quality Conference on the implications of artificial intelligence for patient safety.

Bates opened his remarks by challenging a common assumption: “Most people believe the purpose of healthcare is to reduce health risks. Yet an increasing body of research shows that hospitals themselves are high-risk environments.”

Citing findings from the SafeCare study, published in The New England Journal of Medicine (NEJM), Bates noted that in random samples of hospitalized patients, nearly one in four experiences at least one adverse event. These findings suggest that patient safety failures are not isolated incidents but systemic risks embedded in healthcare delivery.

With the rise of artificial intelligence, expectations have grown that AI could help predict and prevent harms ranging from hospital-acquired infections and adverse drug reactions to venous thromboembolism, by supporting earlier risk detection and better clinical decision-making.

However, Bates emphasized that AI is not a quick fix. Whether it truly improves patient safety depends on how it is implemented, governed, and continuously monitored.

AI Predicts and Analyzes, but False Alerts Remain

According to SafeCare research, traditional patient safety management relies heavily on manual reporting and post-event investigation. Adverse events (AEs) are typically identified, analyzed, and addressed only after harm has occurred, resulting in slow response times and incomplete risk visibility. Yet even in these retrospective data, patterns by age, sex, and ethnicity can be observed, suggesting that AEs are not entirely random.

Bates noted that this is where AI, if applied appropriately, could shift healthcare safety from reactive management to predictive governance. In infection control, predictive AI models can analyze electronic medical records, laboratory results, and care process data in near real time to identify infection risks before outbreaks occur.

In medication safety, models can use drug similarity and historical data to anticipate adverse drug reactions and potential drug–drug interactions for individual patients.

In the prevention of venous thromboembolism, AI systems are already capable of identifying high-risk patients and offering personalized preventive recommendations.

Nevertheless, AI can introduce new safety hazards. Bates cited a study published in JAMA Internal Medicine showing that a widely implemented, proprietary sepsis prediction model built into Epic's electronic health record system performed poorly at predicting sepsis.

The system generated an overwhelming number of alerts while correctly identifying only a small fraction of true sepsis cases. This not only increased clinicians' workload but also risked eroding their trust in alert systems and undermining patient safety.

Patient Safety Starts With Governing AI

Electronic Clinical Quality Measures (eCQMs) represent one of the more promising applications of AI in healthcare quality and safety. Unlike traditional paper-based or manually collected indicators, eCQMs rely on standardized data that can be automatically extracted from clinical systems. This allows healthcare organizations to monitor safety performance in real time and reduce human error in quality measurement.

From Bates’s perspective, the core issue in patient safety is not whether AI is adopted, but whether organizations can continuously evaluate, refine, and retire models that fail to perform as intended. Patient safety, he stressed, is not solely the responsibility of quality management departments. It must be treated as a shared responsibility across the entire healthcare system.